Three key things crossed my desk this month: an OpenAI research paper, an Anthropic update on Claude usage, and a Chinese court ruling about a quality inspector. Different sources, different geographies, but all pointing at the same gaps.

OpenAI published something called the AI Jobs Transition Framework. They mapped roughly 150 million US jobs against near-term AI exposure. The headline numbers split three ways. About 18% face higher short-term automation risk. Around 46% will probably see less change. The remaining 36% sit in the middle: those roles could grow, shift, or change shape entirely.

Within that 18%, theoretical exposure is around 90%. Realised exposure is roughly 24%.

So AI could do nine in ten of the tasks within those roles, but in practice it's doing about a quarter of them. That's a big gap, and it mirrors the Anthropic paper from earlier in the year, which landed in similar territory.

Take it with the usual grain of salt. Both labs have a commercial reason to talk up what their products can do at work. But even with that filter applied, the gap between theoretical and realised use is the interesting bit.

If you're a leader lying awake worrying about AI replacing you or your team, that gap is both your breathing room and your comfort trap. Most organisations aren't using the time to build anything. The economic and productivity impact at scale just isn't visible yet. So the question isn't when the automation arrives. It's what we're doing with this gap right now.

The skill curve, not the access curve

The second signal came from the Anthropic Economic Index, specifically its data on learning curves. They've started publishing data on how Claude usage shifts over time. People who've been using Claude for longer show roughly a 6.4% higher task success rate than people who've just started.

Extrapolate that out and the question stops being who has access. A lot of people do. Shadow AI is everywhere, with people using tools that haven't been sanctioned by IT. The question becomes who's getting better at using them, month by month.

That's a different problem with a different solution.

You can't solve a skill curve by buying licences and writing a policy. The licence is the starting line, not the finish.

A line in the sand from Beijing

The third one is the Chinese court ruling. A quality inspector's employer tried to move him to a lower-paid role because, the company argued, AI could do most of his original job. He refused. They dismissed him. He took them to court for unfair dismissal and won.

The court ruled that cheaper AI on its own isn't a valid justification for redundancy. It pointed employers towards training, upskilling, reasonable reassignment, and fair compensation when AI changes the shape of a role. There was no new AI-specific statute; the court relied on existing labour law. It was a civil case, as I understand it, so the broader implications are still being tested.

It's a different legal system to ours. But the signal is still strong.

I'm not sure where this lands long-term. The live debate is whether courts protecting people from AI-driven cost-cutting is the right mechanism, or whether something more structural is needed. What I am sure of is that using AI as a pure cost-reduction lever is short-sighted.

The whole point of bringing AI into a workplace, to my mind at least, is to make people more capable, unlock capacity, and add value to clients. Companies that miss that are storing up problems.

The conversation I keep having

I was on a Q&A panel in Leeds last week at the AI Confidence Meetup. The questions weren't about which model to pick or which licence to buy. They were about implementation. What practical steps actually work. Which teams succeed and which stall. Where the friction points are.

What's not working is buying a licence and hoping something happens.

One pattern came up more than once: you need senior leaders in the tools, testing them, getting things wrong, and learning alongside everyone else.

It's uncomfortable. You get to a certain point in leadership and people expect you to have answers. Admitting you're unsure where this goes is hard. But nobody really has the answer yet. The court ruling, the research papers, the panel conversations: the same message from three angles.

The gap isn't the tool. It's the leader's relationship with the tool.

What it looks like when this is done well

In episode four of the show I spoke to Julian Schoemig, who runs a 25-person FinTech called Diesta. He's built internal MCP (Model Context Protocol) servers that pull from his company's codebase, documentation, and operational logs, so he gets a near-live picture of what's happening in the business. He uses those connections before client meetings to stress-test his thinking. The team uses vibe coding to prototype changes during meetings, work that would have taken weeks of dev time a year ago.

Turning up to a meeting with a working prototype, not a slide about a working prototype.

He didn't do this because it's trendy. He did it because the cost of pulling the wrong person out of their flow is high: it takes 15 to 20 minutes to get back into deep work. So he's leaning on AI to protect the team's attention.

The technical solution is the visible bit. Underneath it is a leadership question he's actually answered: how do I lead better, and where does AI belong in how I work? A lot of leaders haven't asked themselves that yet. The technology is too new and they're not close enough to the front line.

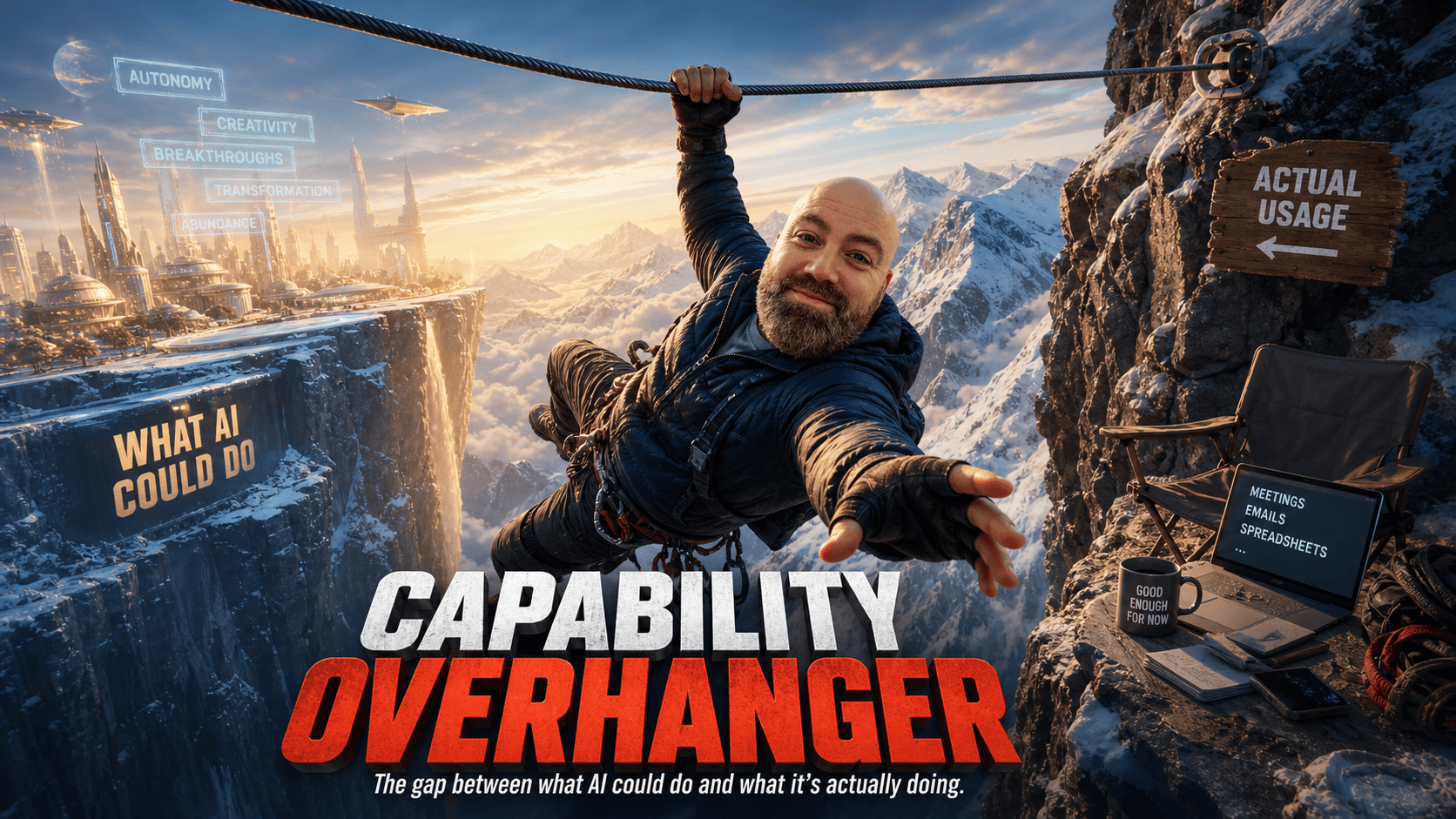

The capability overhang

The phrase I want to borrow from the OpenAI report is "capability overhang". It describes the gap between what AI could theoretically do and what it's actually doing. I think it applies just as well to leadership.

Most businesses have a capability overhang inside them right now. The tools can do more than their people are getting out of them. The Anthropic data suggests that gap doesn't close on its own. It's a skill curve, which means it closes through iterative practice, not mere familiarity.

If you've bought your team Copilot licences and circulated a policy, you haven't closed the gap. You've started the conversation.

This window isn't permanent. The overhang exists because the tools are still new enough that shallow use produces visible output, which makes it feel like enough. Once the models are capable enough that even shallow use looks impressive, shallow use stops being a differentiator, and the advantage shifts entirely to people who started learning earlier and went deeper. That's already starting to happen.

The teams I see winning are the ones learning together. Leaders going deep with their people, not delegating the curiosity downwards. It's not a quick fix; real transformation takes structure and time. But the first domino has to fall in the leader's own working week.

That's it for this month's Frontier Brief. If anything in here landed, or didn't, I'd genuinely like to hear which bit.

The conversations are usually more useful than the article.

✅ I help senior leaders close the gap between knowing AI matters and knowing what to do about it, starting with decision-making, not technology.

🧭 Get your copy of the Multiply Method at www.theaileaderlab.com/roadmap

It's a FREE roadmap walking you through how to multiply the capabilities of your people and your business with AI.

🗣️ If that's the conversation you want to have, book a call here: https://calendly.com/martin-rawsonellis/30min

📍 Or visit: www.theaileaderlab.com