1.5 hours a week.

That's how long the average senior executive spends using AI at work, according to a study of 6,000 CEOs and C-suite leaders across four countries. I spend longer than that choosing what to watch on Netflix.

It's a number that stuck with me when I read Anthropic's latest research into AI's actual impact at work, because it explains a lot about the gap between what AI can do and what we're doing with it. Anthropic, the company behind Claude, published a paper measuring how AI is affecting jobs. Not predicting. Not speculating. Measuring, using their own usage data alongside US government labour statistics.

The headline finding?

AI's theoretical capability massively outstrips its actual deployment. And the gap isn't small.

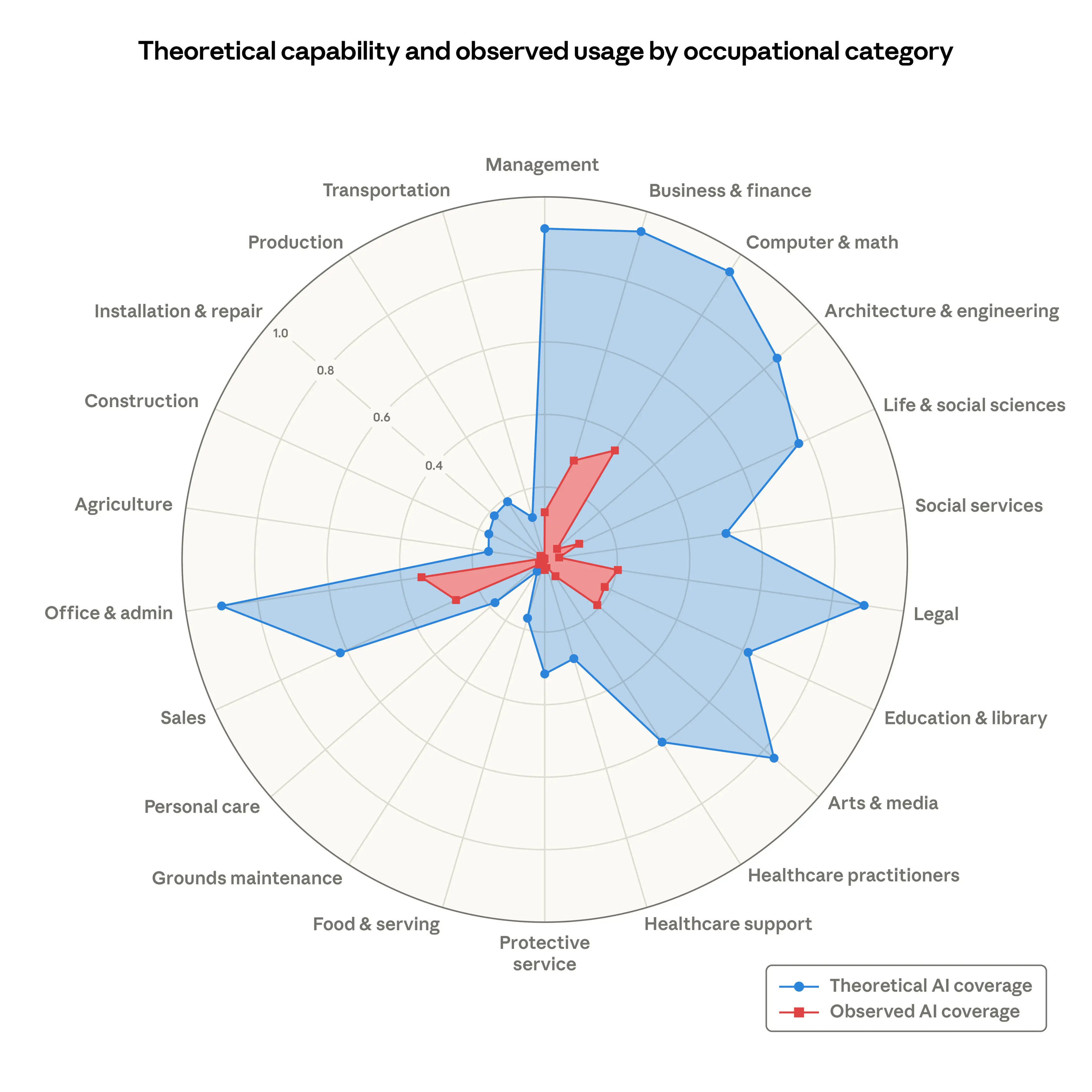

Take computer and maths occupations. According to the researchers, LLMs could theoretically handle 94% of the tasks in those roles. The actual coverage from real-world usage? 33%.

That's a third of what's possible. In the category where AI is arguably strongest.
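If you want to sanity-check that "a third" for yourself, or run the same sum for another occupation group, here's a minimal sketch. The function name is my own invention, not something from the report, and the two inputs are simply the percentages quoted above.

```python
def realised_share(observed_pct: float, theoretical_pct: float) -> float:
    """Return the fraction of theoretically coverable tasks seen in real usage.

    Both arguments are percentages of an occupation's tasks (e.g. 33 and 94).
    The name and signature are illustrative, not taken from the report.
    """
    if theoretical_pct <= 0:
        raise ValueError("theoretical_pct must be positive")
    return observed_pct / theoretical_pct


# Computer and maths occupations, using the figures quoted in this post.
share = realised_share(observed_pct=33, theoretical_pct=94)
print(f"Observed usage is about {share:.0%} of theoretical coverage")
```

Swap in the numbers for any other category and you get the same kind of ratio: how much of the theoretical ceiling is actually being used.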

For office and admin roles, the theoretical exposure sits around 90%. Observed usage? A fraction of that. Business and finance, legal, sales, arts and media... same story across the board. The red area on their radar chart (what's actually happening) barely fills a quarter of the blue area (what's theoretically possible).

So where's the rest going?

Why the gap exists

This is where it gets interesting for anyone running a team or a business.

The researchers flag several reasons why theoretical capability doesn't translate into actual use: model limitations, legal constraints, specific software requirements, human verification steps, and what they politely call "other hurdles."

I'd add a few more from what I'm seeing on the ground. Leadership uncertainty, for one. A quarter of the executives surveyed in the 6,000-leader study I opened with (an NBER paper) weren't using AI in the workplace at all. And the ones who were had barely scratched the surface.

Then there's the compliance question, which is particularly acute in financial services and insurance. Data security, regulatory uncertainty, the sheer novelty of the technology. These aren't irrational objections. They're real barriers that take time to work through.

And honestly? I think there's a large cultural piece too. A lot of organisations still have senior leaders who haven't personally worked with AI in any meaningful way. It's hard to sponsor what you don't understand. Even harder to set direction for your teams when you're not sure where the boundaries are yourself.

We've been here before

This gap between capability and productivity payoff has a name. Robert Solow, the Nobel-winning economist, noticed something odd in 1987: businesses were pouring money into computers, but productivity statistics weren't budging. His observation became known as the Solow Productivity Paradox.

IT investment surged through the 1970s and 80s. Productivity gains didn't show up until the mid-to-late 1990s. Close to two decades of investment before the economic returns became visible.

The parallel to where we are with AI right now is uncomfortable, or maybe reassuring, depending on your temperament. Apollo's chief economist, Torsten Slok, put it bluntly in a recent note: "AI is everywhere except in the incoming macroeconomic data."

The difference this time, though, is that AI capability is advancing faster than early computing did. The question is whether our organisations can keep pace with the technology, or whether we're heading for the same 15-year lag while we figure out how to actually embed it.

What the data says about who's most exposed

The Anthropic report identifies the occupations with highest observed exposure. Computer programmers top the list at 75% task coverage. Customer service representatives sit at 70%. Data entry specialists at 67%. Market research analysts, financial analysts, software QA testers all feature.

What's striking is the demographic profile of the most exposed workers. Compared to unexposed workers, they're more likely to be female, white, better educated, and significantly higher paid (47% more on average). Graduate degree holders are four times more represented in the exposed group.

This isn't a blue-collar displacement story. Not yet, anyway.

30% of workers have zero AI exposure in their daily tasks. Cooks, mechanics, bartenders, lifeguards. The people whose jobs involve being physically present, doing physical things. The gap between the most and least exposed workforces isn't narrowing. If anything, it's becoming more defined.

The young worker signal

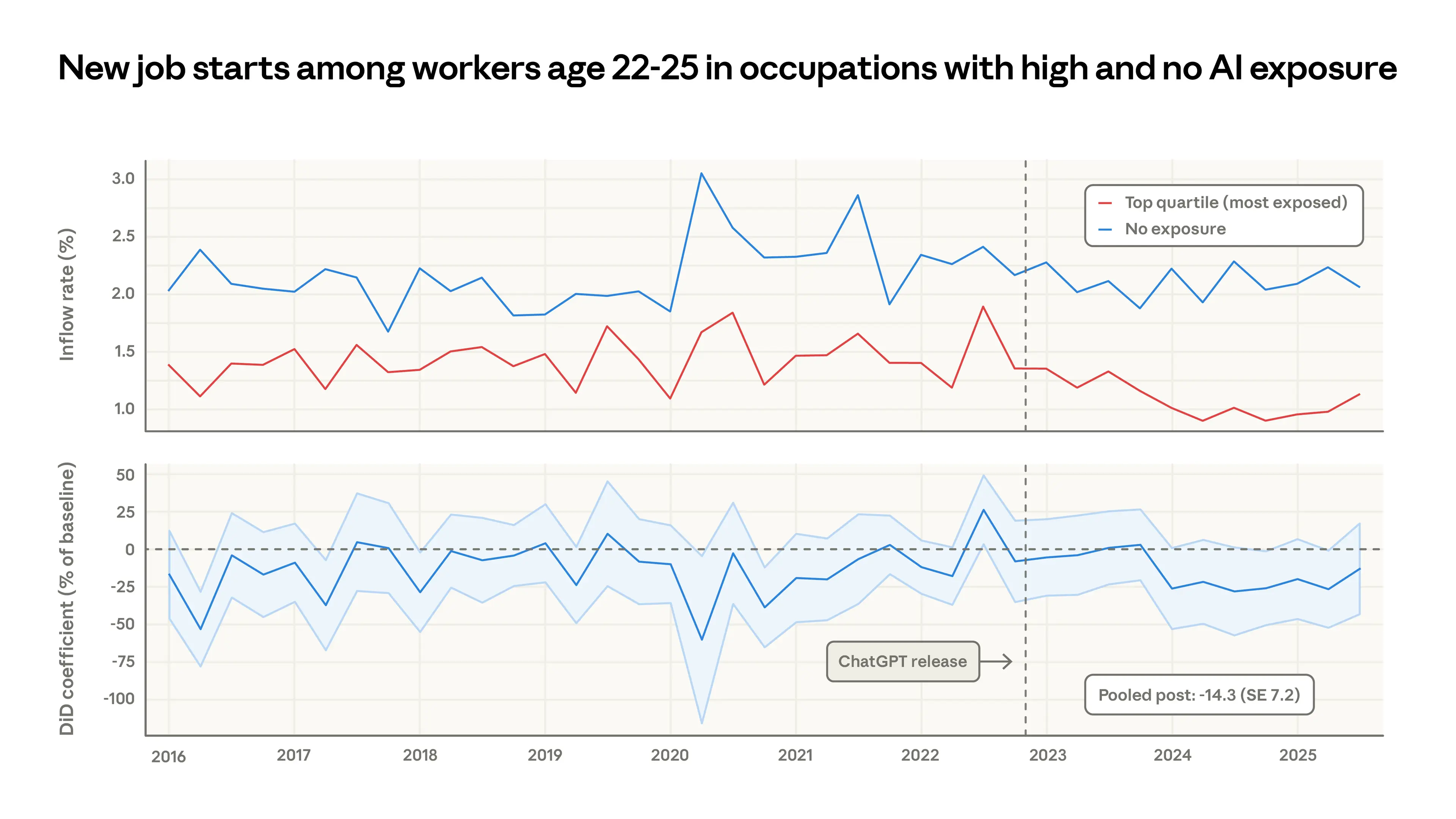

The researchers found no systematic increase in unemployment for highly exposed workers since late 2022. That sounds reassuring until you look at the age breakdown.

For workers aged 22-25, hiring into exposed occupations has dropped roughly 14% since ChatGPT launched. Job-finding rates in less exposed occupations stayed flat. This pattern doesn't appear for workers over 25.

The researchers are careful to note this is suggestive, not conclusive. Young workers might be staying in existing jobs, taking different roles, or going back to education. But it's still a signal.

IBM seems to have noticed something similar and decided to act. They recently announced they're tripling their intake of young hires, reasoning that automating entry-level work today creates a leadership pipeline problem tomorrow. You can't develop mid-level managers if you never hired them at the junior level.

I think that's probably the sharpest insight in this whole conversation. The entry-level roles that feel most automatable are often the same roles that develop the judgement, context and institutional knowledge that make someone effective five or ten years later. Automate too aggressively at the bottom and you hollow out the middle.

So what does this actually mean?

I'm not going to pretend I've got a neat five-point plan. But a few things keep coming back to me.

The gap between theoretical capability and actual deployment is enormous. That gap represents either wasted potential or a sensible pace of adoption, depending on your perspective. Probably a bit of both.

For the leaders I work with, the practical question isn't "will AI affect my industry?" (it will) or "when will AI replace my team?" (probably not how you think). It's more like: what would it take to move from 1.5 hours a week to something that actually changes how decisions get made?

That's a cultural shift, not a technology deployment. It means leaders getting hands-on themselves, not just sponsoring an AI workstream and hoping the PowerPoint deck looks good at the next board meeting. It means rethinking entry-level roles rather than just automating them. And it probably means accepting that, like the computer revolution before it, the productivity returns won't arrive on the timeline the vendors are promising.

The Solow Paradox eventually resolved. Productivity did catch up. But only after organisations fundamentally changed how they worked, not just what tools they used.

I suspect AI will follow a similar pattern, albeit slightly faster. The question is whether your organisation will be in the group that figured it out early, or the group that's still waiting for the technology to do the work for them.

Still working that one out myself, if I'm honest.

The Anthropic report "Labor market impacts of AI: A new measure and early evidence" by Massenkoff and McCrory was published on 5 March 2026.